The second "Empowered Design, By 'the Crowd'" article offers insight into making the most out of new crowdsourcing resources.

Over the past decade, crowdsourcing has grown in significance through crowdfunding, crowd collaboration, crowd voting, and crowd labor. The idea behind crowdsourcing is simple: decentralize decision-making by drawing on large groups of people to help solve problems, generate ideas, raise funds, produce data, and make decisions. Crowdsourcing has been used in both the private and public sectors. In a previous article, "Empowered Design, By 'the Crowd,'" we discussed the significant role crowdsourcing can play in urban planning through citizen engagement.

Crowdsourcing in the public sector represents a more inclusive, multi-stakeholder form of governance. It goes beyond conventional community engagement and allows citizens to participate in decision-making. When citizens help inform decisions, cities gain new opportunities, and planners are beginning to see those opportunities unfold. Yet despite its clear utility, crowdsourcing remains underused by planners. One key reason for this underuse is a lack of credibility and accountability in crowdsourcing efforts.

Credibility in crowdsourcing refers to the capacity to trust a source and to discern whether information is, indeed, true. It can be difficult to know whether any information is definitively true. Still, indicators of accuracy include where the information was collected, how it was collected, and how rigorously it was fact-checked or peer-reviewed. However, in today's digital universe, some individuals make a habit of posting inaccurate, salacious, malicious, or flat-out false information. These realities of contemporary media make it harder to trust crowdsourced information for decision-making. The problem is especially acute in the public sector, where acting on inaccurate information can affect the lives of many people and the trajectory of a city. As a result, planners need to establish accountability measures that make crowdsourcing more reliable in urban planning.

Establishing Accountability Measures

For urban planners considering crowdsourcing, establishing a system of accountability measures might seem like more effort than it is worth. It is not. Recent evidence indicates that participation in traditional community engagement (e.g., town halls, forums, city council meetings) is lower than ever. Current engagement also tends to focus on problems in the community rather than on the community's development. Crowdsourcing offers new opportunities for ongoing, sustainable engagement with the community, and it can be simple as well.

The following four methods can be used separately or together (we hope they are used together) to help establish accountability and credibility in the crowdsourcing process:

- Agenda setting

- Growing a crowdsourcing community

- Facilitators/subject matter experts (SMEs)

- Microtasking

In addition to boosting credibility, building a framework of accountability measures can help planners and crowdsourcing communities clearly define their work, engage the community, sustain community engagement, acquire help with tasks, obtain diverse opinions, and become more inclusive.

Agenda Setting and Growing a Crowdsourcing Community

Agenda setting in the public sector is often a controversial process that excludes the public. However, crowdsourcing has been used in several ways to increase the community's role in agenda setting. For instance, administrators in Central Falls, Rhode Island, used the crowdfunding platform Citizinvestor to fund community priorities. Citizens in Central Falls selected new trashcans for the local park as a neighborhood priority because trash was littered throughout the park, and sixty-eight people donated money for them. Similarly, the New York Police Department (NYPD) uses the crowdsourcing platform IdeaScale to invite specific communities to nominate problems or quality-of-life issues for the police to address. In one case, the 100th Precinct community cited late-night noise coming from a local bar. The police facilitated a meeting between concerned community members and the bar owner, who worked together to reach a mutually acceptable agreement about the noise. Helping the crowdsourcing community come together and decide which issues to work on strengthens credibility, because the issues being addressed are the ones people actually want addressed. It also fosters ongoing engagement.

Ongoing engagement also grows the crowdsourcing community. At first, individuals who are interested in a single topic are likely to be the most frequent participants. Others will recognize the value of the crowdsourcing platform as achievements take shape. This may be common sense, but your most critical asset is the members of your community. For community members to spend their time on crowdsourcing platforms, planners need to gain their trust. Once that trust is earned, word of these efforts will spread among participants' families and friends, which will in turn grow the crowdsourcing community. Planners can encourage that growth by critically examining how well connected a community is and how those connections can be strengthened. Ask yourself the following questions:

- Do you offer a two-way dialogue to receive feedback?

- Do you have a team to advocate and ensure needs are met?

Oftentimes, cities only “push” information out to the public without interacting with it, and often for good reason. A common obstacle to productive interaction is a lack of time or staff. Communicating with the public on social media can also get sticky at times. On crowdsourcing platforms, however, someone needs to be available to answer questions and provide feedback quickly. This shows the community that their work is not futile and that they are working in tandem with their city officials.

Microtasking and Subject Matter Experts

Is there code that needs to be developed to run a specific model, or data that needs to be curated before it is made available to the public? Microtasking could be the answer.

Microtasking is an exciting feature of crowdsourcing because small actions by many individuals, taken together, can have a big impact across networks. Essentially, microtasking is the division of one large task into many smaller tasks. Perhaps the best-known example of microtasking and its impact is Wikipedia. Wikipedia has flourished on the public's willingness to add small bits of information, creating a free database of information on anything and everything for the world to consume. Crowdsourcing platforms such as CDCology, PhillyTreeMap, and the Smithsonian Transcription Center also offer opportunities for microtasking. Digital volunteers with the Smithsonian Transcription Center make historical documents more accessible by learning to accurately transcribe field notes, diaries, ledgers, logbooks, manuscripts, and biodiversity specimens.
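For planners who want to picture what "dividing one large task into smaller tasks" looks like in practice, here is a minimal Python sketch. It assumes a transcription job in the style of the Smithsonian Transcription Center, with one microtask per page. The class and function names (`Microtask`, `split_into_microtasks`, `complete`, `progress`, `assemble`) are hypothetical illustrations, not any platform's actual API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Microtask:
    task_id: int
    page: str                            # one small slice of the larger job
    transcription: Optional[str] = None  # filled in by a volunteer


def split_into_microtasks(pages: List[str]) -> List[Microtask]:
    """Divide one large transcription job into one microtask per page."""
    return [Microtask(task_id=i, page=p) for i, p in enumerate(pages)]


def complete(task: Microtask, text: str) -> None:
    """Record a volunteer's transcription for a single page."""
    task.transcription = text


def progress(tasks: List[Microtask]) -> float:
    """Fraction of the large job that volunteers have finished."""
    done = sum(1 for t in tasks if t.transcription is not None)
    return done / len(tasks) if tasks else 0.0


def assemble(tasks: List[Microtask]) -> Optional[str]:
    """Reassemble the full document, or return None while any page is open."""
    if any(t.transcription is None for t in tasks):
        return None
    ordered = sorted(tasks, key=lambda t: t.task_id)
    return "\n".join(t.transcription for t in ordered if t.transcription is not None)
```

Usage: split a four-page diary with `split_into_microtasks([...])`. Then let different volunteers call `complete` on whichever pages they choose. `progress` reports how much of the job is finished, and `assemble` returns the whole document only after every page is done. No single volunteer needs to commit to more than one page.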

The most valuable aspect of microtasking is that experts and laypersons alike can make meaningful contributions to projects with low commitment while increasing accountability and credibility. Sites that use microtasking currently rely on a two- to three-step process for submitting and approving information. These processes draw on the time and resources of volunteers and on the knowledge of subject matter experts (SMEs), who vet information and train volunteers.

A good example of this is Observations.be, an online crowdsourcing platform developed to monitor biodiversity in Belgium. The platform allows any registered user to enter observations of a wide range of species (e.g., birds, mammals, reptiles, insects). Participants record their observations on a geospatial map and can include photos, sound files, and commentary. To strengthen the credibility of observations, the platform is built around collective action, with working groups central to its function. Each working group has administrators (SMEs) who are responsible for validating each entry before it is integrated into the Observations.be database. Validation follows a three-step filter protocol that helps keep misinformation from being published.

The user serves as the first filter against incorrect information by categorizing their own observations. If they question or doubt a categorization, they can enter “?” in the category line. In either case, a species coordinator will contact the user to help them identify the species. The second filter is an automatic message sent to any user who enters an observation of a rare species. The message asks the user to answer a series of questions confirming the observation and prompts them to upload a photo to validate the claim. The third filter is a special coordinator (usually a specialist or veteran nature observer). This coordinator reviews the entry and, if needed, questions the user about the observation. The coordinator may also send a questionable entry to discussion forums of other specialists or veteran observers, who either discard it or accept it for integration into the larger database.
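To make the order of the three filters concrete, the following Python sketch routes a single observation through them. It is a simplified illustration under stated assumptions, not how Observations.be is actually implemented.

- `RARE_SPECIES` is a hypothetical stand-in for the platform's list of rare species.
- `coordinator_decision` is a hypothetical input representing the human reviewer's call: "accept", "question", or "forum".
- Only the "?" case is modeled as triggering coordinator help at the first filter.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-in for the platform's list of species that trigger extra checks.
RARE_SPECIES = {"wildcat", "black stork"}


@dataclass
class Observation:
    user: str
    species: str                 # the user's own categorization, or "?" if unsure
    photo: Optional[str] = None  # path or URL of a supporting photo, if any


def validate(obs: Observation, coordinator_decision: str) -> str:
    """Route one observation through a simplified version of the three filters."""
    # Filter 1: the user categorizes the observation; "?" flags doubt,
    # so a species coordinator steps in to help identify it.
    if obs.species == "?":
        return "pending: coordinator helps user identify species"
    # Filter 2: a rare species triggers an automatic message asking the user
    # to answer confirmation questions and upload a photo.
    if obs.species in RARE_SPECIES and obs.photo is None:
        return "pending: automatic message requests confirmation and photo"
    # Filter 3: a coordinator (specialist or veteran observer) reviews the entry.
    if coordinator_decision == "accept":
        return "accepted into database"
    if coordinator_decision == "question":
        return "pending: coordinator questions user"
    if coordinator_decision == "forum":
        return "pending: sent to specialist discussion forum"
    raise ValueError(f"unknown decision: {coordinator_decision!r}")
```

The key design point is that the checks run in sequence. A rare sighting cannot reach the human reviewer until the automatic message has collected a photo, so SMEs spend their time on entries that have already passed the cheaper checks.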

Facilitators and SMEs can also build editing and fact-checking into the accountability system, which adds credibility to the crowdsourcing process. We don't mean that facilitators and SMEs edit community voices. Rather, they ask for clarification, reflect that clarification back, and ensure that the information collected is presented and understood as closely as possible to the crowdsourcer's intended meaning. This is crucial for making the best use of the information, because crowdsourcers can misremember details (e.g., listing the wrong street where an incident occurred or confusing two streets). This back-and-forth also increases the likelihood that individuals will return to the platform, because they are engaged in conversations beyond their initial post.

Conclusion

For urban planners, crowdsourcing can be appealing because of the instant access to information. Planners play a major role in guiding the development of the community, which should be done in partnership with the community. Planners should consider how they can use crowdsourcing for the good of their city and how to do so credibly. This will not happen all at once, or easily. However, it is worth the effort in the long run.

If you have experience applying any of these strategies in your crowdsourcing efforts, please leave a comment or send us a note about it.

Kendra L. Smith, Ph.D. is a Post-Doctoral Scholar for Public Service and Community Solutions and a research fellow at the Center for Urban Innovation at Arizona State University. Connect with Kendra on Twitter @KendraSmithPhD.

Lindsey Collins is a Masters of Advanced Study Candidate in Geographical Information Systems in the School of Geographical Sciences and Urban Planning at Arizona State University. Connect with Lindsey on Twitter @Lmcoll1 and LindseyCollins.net.

Planetizen Federal Action Tracker

A weekly monitor of how Trump’s orders and actions are impacting planners and planning in America.

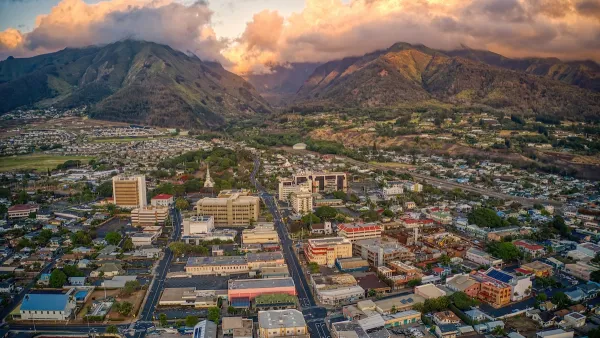

Maui's Vacation Rental Debate Turns Ugly

Verbal attacks, misinformation campaigns and fistfights plague a high-stakes debate to convert thousands of vacation rentals into long-term housing.

San Francisco Suspends Traffic Calming Amidst Record Deaths

Citing “a challenging fiscal landscape,” the city will cease the program on the heels of 42 traffic deaths, including 24 pedestrians.

Defunct Pittsburgh Power Plant to Become Residential Tower

A decommissioned steam heat plant will be redeveloped into almost 100 affordable housing units.

Trump Prompts Restructuring of Transportation Research Board in “Unprecedented Overreach”

The TRB has eliminated more than half of its committees including those focused on climate, equity, and cities.

Amtrak Rolls Out New Orleans to Alabama “Mardi Gras” Train

The new service will operate morning and evening departures between Mobile and New Orleans.

Urban Design for Planners 1: Software Tools

This six-course series explores essential urban design concepts using open source software and equips planners with the tools they need to participate fully in the urban design process.

Planning for Universal Design

Learn the tools for implementing Universal Design in planning regulations.

Heyer Gruel & Associates PA

JM Goldson LLC

Custer County Colorado

City of Camden Redevelopment Agency

City of Astoria

Transportation Research & Education Center (TREC) at Portland State University

Jefferson Parish Government

Camden Redevelopment Agency

City of Claremont