A pilot program in Cal Poly, San Luis Obispo's City and Regional Planning Department explored the potential for an online video course platform to enrich and extend curriculum.

Editor's disclosure: Planetizen Courses, the subject of the white paper shared below, is a Planetizen product. The subscription-based business model of Planetizen Courses means Planetizen has an interest in its financial success. We present this paper, however, with its overwhelming interest to the Planetizen audience in mind. As we see it, the white paper's findings are relevant to the Planetizen audience for at least three reasons:

- The growing prevalence of online education is only beginning to be explored in the planning field. This evaluation furthers the understanding of educators regarding the potential of these tools.

- There is a persistent need to evaluate and deploy all of the resources available to train future generations of planners.

- The status quo of planning education does not always fit with the learning styles and interests of the current generation of planning students, and online training offers new tools for expanding the pedagogical approach to today's students.

The white paper is also available in PDF format.

Teaching Methods in Urban Planning Using Planetizen Courses

Introduction

This paper evaluates a pilot experiment at Cal Poly San Luis Obispo to: 1) increase the learning tools available in a quantitative methods course classroom, transitioning to a high-tech, virtual environment and 2) redesign the curriculum to embrace a self-organized learning environment that guides students to the threshold of complex issues and facilitates self-actualized, liminal moments (Meyer & Land, 2013). The goal of this project, funded through a Promising Practices grant from the California State University (CSU) Chancellor’s Office, was to explore a virtual classroom for learning technical skills in urban planning—alleviating the demands of oversubscribed labs and effectively doubling class capacity.

The two courses included in the pilot were City and Regional Planning (CRP) 213, Methods in Population & Housing and CRP 216, Computer Applications for Planning. CRP 213 teaches population, housing, and employment methods, requiring students to collect data, organize it, and present. The course includes a quantitative lab, where students engage in computational analysis of data using Excel, a program that many students are unprepared to use. CRP 216 offers a basic orientation and introduction to a suite of design software including Photoshop, Sketchup, AutoCAD, and ArcGIS.

Both courses were taught in a lecture-lab format, which research shows may not best facilitate learning for the 21st century student. Many contemporary students are self-organized learners, who want to explore topics and engage in creative discovery on their own and at their own pace (Chow, Davids, Hristovski, Araújo, & Passos, 2011; Meyer & Land, 2013). Furthermore, the traditional format used for these courses is less than optimal for ensuring long-term success and retention (Hsia, Huang, & Hwang, 2015; O’Flaherty & Phillips, 2015). In this environment, students tend to rely on the instructor, peers, and those near them to learn computer-based skills. This environment may serve as an intellectual handicap, preventing them from acquiring the necessary problem-solving skills to complete such tasks on their own in the future.

Background

This pilot occurred in the context of a growing body of research revealing that many teaching techniques do not correspond to the most recent findings on student learning styles. Current literature in the science of teaching and learning (SOTL) indicates students may be increasingly responsive to more self-organized or self-directed problem solving over traditional lecture formats (Chow et al., 2011; Kop, Fournier, & others, 2011; Wallner & Menrad, 2012). At the same time, many campuses are experiencing enrollment pressures with a need for increasing class size in space-constrained environments, especially for courses that involve computer skills and the use of computer labs (Aspelund & Bernhard, 2015; Cocciolo, 2010; Rizzo & Ehrenberg, 2004).

Methods

Using online videos and quizzes from Planetizen Courses, a cohort of approximately 100 students engaged in a redesigned course that used a virtual lab environment to relay technical or computer-based skills, with only virtual chat help from the instructor. Examples of the skills presented in the class include basic economic analysis, Adobe Photoshop, Google Sketchup, and Geographic Information Systems. Results were gathered using: 1) pre/post surveys, 2) time spent online, and 3) academic performance. This project was entirely funded by the CSU Chancellor's office and received no funding from Planetizen Courses or its affiliated companies.

Setting Up the Courses

To set up an online course, relevant courses were selected from the Planetizen Courses website to match the curriculum. The site offered an online "Educator Tools" interface, where multiple courses could be added and grouped for a specific set of users. The Educator Tools interface is illustrated in Figure 1. Once the courses were selected and grouped, student accounts were added to or associated with the account.

Implementing the Courses

During the first meeting of each of these courses, students were given specific information about the redesigned course to explore self-organized learning environments. The following actions were taken during the initial meeting of each course:

- Language was provided in the syllabus explaining self-organized learning.

- A formative survey was issued, exploring what the students knew about the topic, how comfortable they felt working in a self-organized environment, and how often they had been allowed to work in such a way.

Students also used the Cal Poly online learning management system (LMS), called PolyLearn, to view the course syllabus and track all daily, weekly, and term-long assignments.

Each week, students were required to watch one or two Planetizen courses, which served either to underscore or enrich in-class lectures. Each Planetizen course had an associated quiz to ensure completion. Customized completion certificates from Planetizen Courses (with student names) were then uploaded to PolyLearn for credit/no credit grading. (Note: Students were required to watch 90 percent of the video course and score 80 percent or better on the Planetizen course quiz to receive credit.)
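The credit rule in the note above can be expressed as a simple check. This is a minimal sketch only: the function name and the representation of both values as fractions between 0 and 1 are assumptions made for illustration, not part of the Planetizen Courses or PolyLearn systems.

```python
def earns_credit(fraction_watched: float, quiz_score: float) -> bool:
    """Return True when both thresholds from the course note are met.

    fraction_watched: share of the video course watched (0.0 to 1.0).
    quiz_score: share of quiz points earned (0.0 to 1.0).
    Both parameter names are hypothetical.
    """
    # Credit requires at least 90 percent watched AND at least 80 percent on the quiz.
    return fraction_watched >= 0.90 and quiz_score >= 0.80


if __name__ == "__main__":
    print(earns_credit(0.95, 0.85))  # both thresholds met
    print(earns_credit(0.95, 0.75))  # quiz score too low
```

Because grading was credit/no credit, a single boolean is enough to capture the outcome for each weekly course.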

Assessment

Before beginning the class, students were asked to take a survey to rate their comfort with the subject material. After completing the course, students were asked to repeat the same survey. Comfort with the material was then compared between the pre- and post-assessments. While this approach was limited by evaluating student perception of success in the course, SOTL literature has shown it to be a valid method that consistently tracks course performance (Dominici & Palumbo, 2013; Miller, Imrie, & Cox, 2014; O’Flaherty & Phillips, 2015). Confidence in the subject area can also be a key learning outcome from courses (Dinsmore & Parkinson, 2013; Hawkins, Graham, Sudweeks, & Barbour, 2013; Komarraju & Nadler, 2013). Still, to alleviate any concerns about this factor, pre- and post-assessments were compared to course performance (i.e., grades) and the minutes each student spent watching the online courses. Qualitative results were also used to evaluate students' perceived value of the online courses in enhancing their educational experience.

Results

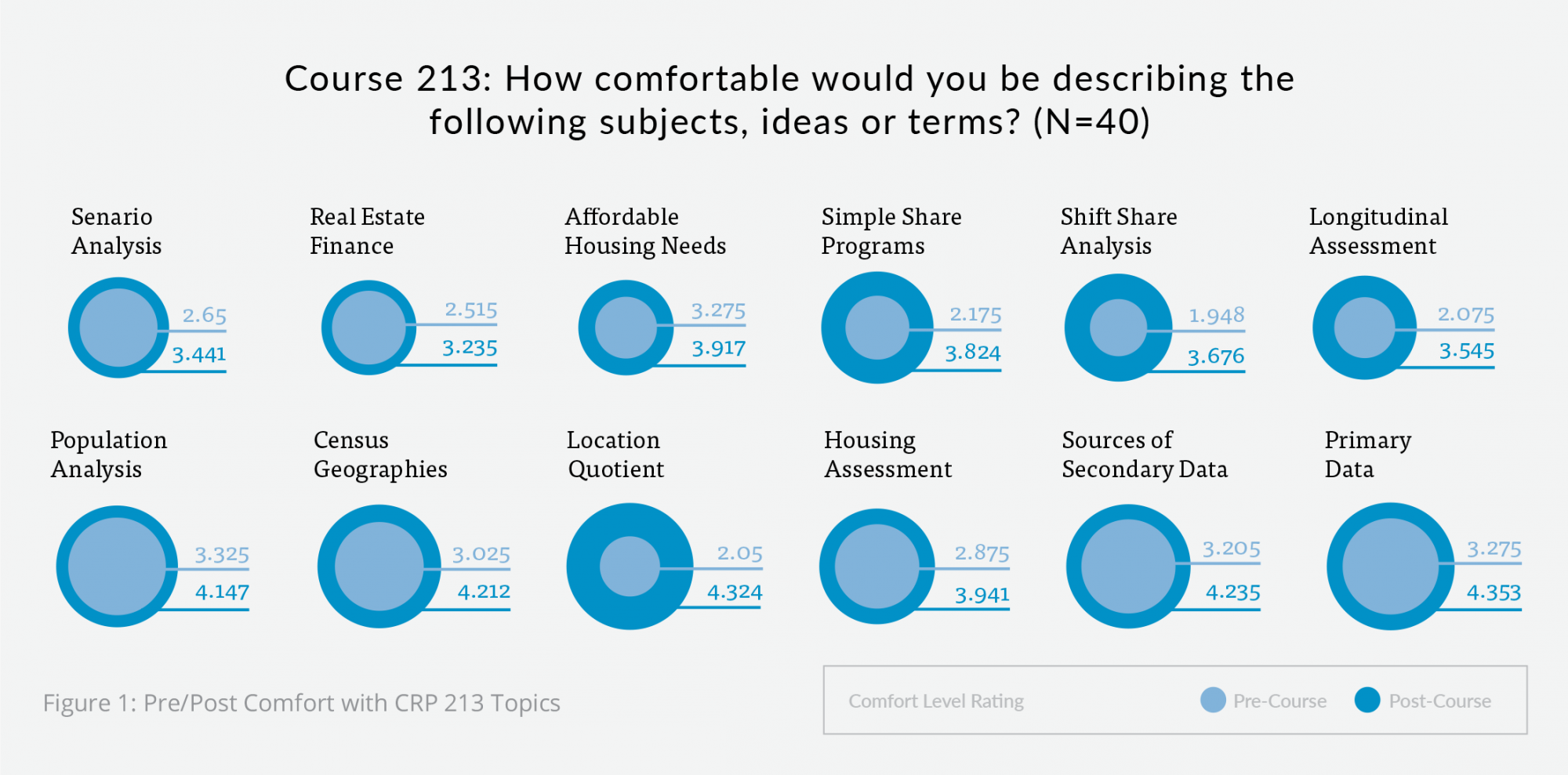

The graphs below provide a summary of the pre- and post-assessment from the course redesign. The students enrolled in the online CRP 213 course dramatically increased their comfort level in every category. For example, students' comfort understanding Location Quotient more than doubled. Comfort with material such as understanding primary data, simple share projection, and housing assessment each increased by one point.

A majority of students reported liking the (online) lab modules the most. A majority of students also reported that the (online) lab modules contributed the most to learning. One student represented many of the sentiments saying,

"The labs contributed most to my learning because I was forced to work through challenges by myself. I was uncomfortable with [Microsoft] Excel going into the course, and I now feel more equipped with the application, which will definitely come in handy in the future."

Another student added an appreciation of the flexible learning environment by describing what s/he appreciated about the online components:

"I liked how the class was very informal, but it also provided a very nice learning environment."

Alternatively, a handful of students felt that online Planetizen Courses components contributed the least amount to their learning. One student reported: "I learned more from Dr. Riggs in class, and even Dr. Riggs' videos [than I learned from the other course videos]."

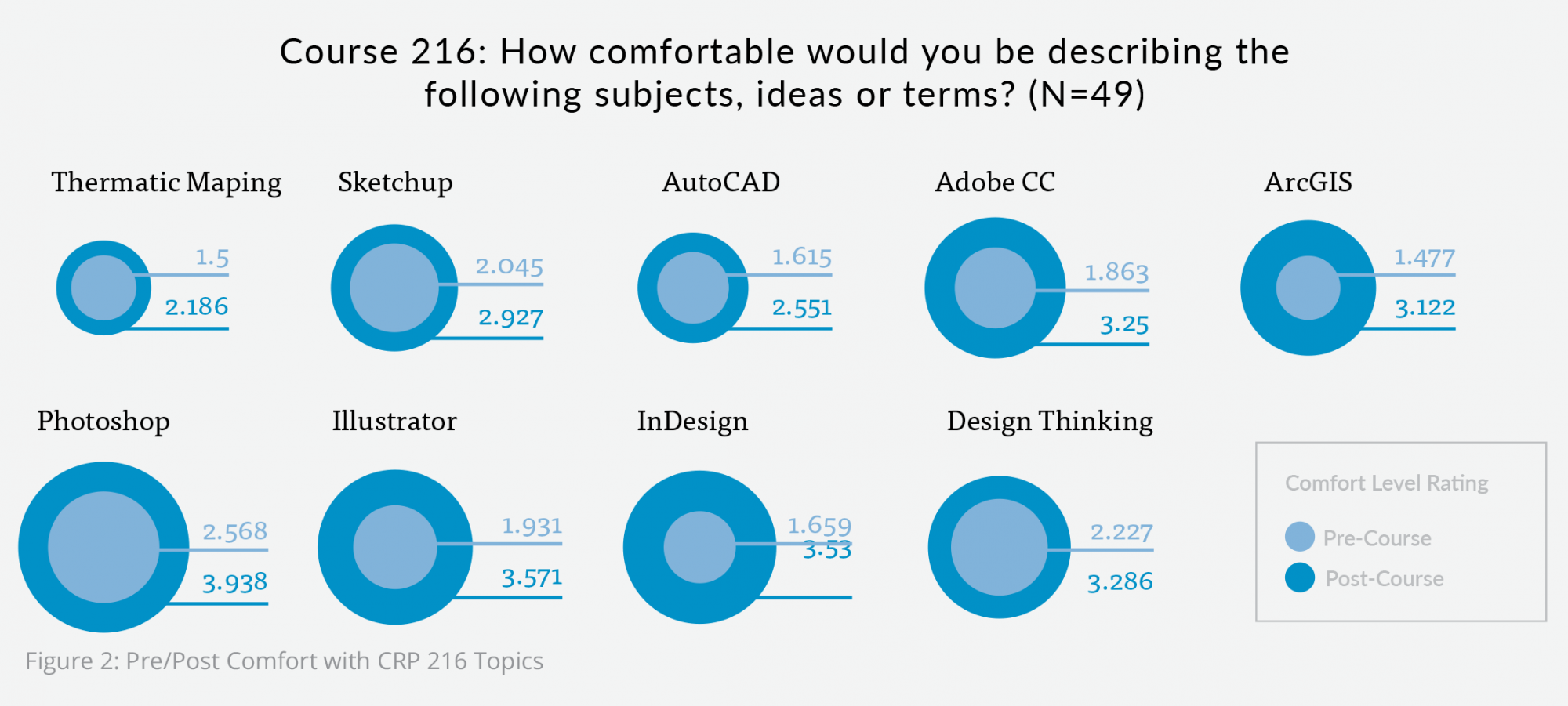

The above graph represents the average pre-course and post-course comfort level score from the CRP 216 pilot. The students enrolled in CRP 216 dramatically increased their comfort level in every category. For example, students' comfort with both ArcGIS and Adobe Creative Cloud more than doubled. The increase in students' confidence in these subjects shows that online classes such as course 216 are an effective medium for teaching.

Students demonstrated command of the software during a capstone project. Most said the capstone project was the most rewarding part of the class. One student noted, "I liked doing the Capstone Assignment because it allowed me to perform what I knew about the programs that we learned about. It tested my abilities."

Additionally, students stated that the quizzes contributed significantly to their learning.

Alternatively, a subset of students responded that the online videos made the least impact on their overall learning. This subset of students felt that the online videos were too long or contained irrelevant information. This was an interesting finding in that many students expected entertainment from the online system, and some students were more explicit in their feedback that they did not always enjoy the video delivery because it was "too much work" or because they "actually had to take notes." We posit that this phenomenon is akin to a "Netflix effect," where students expect an onscreen education model to be movie-like. The expectations of "infotainment" provide an important caution when embarking on this kind of online learning.

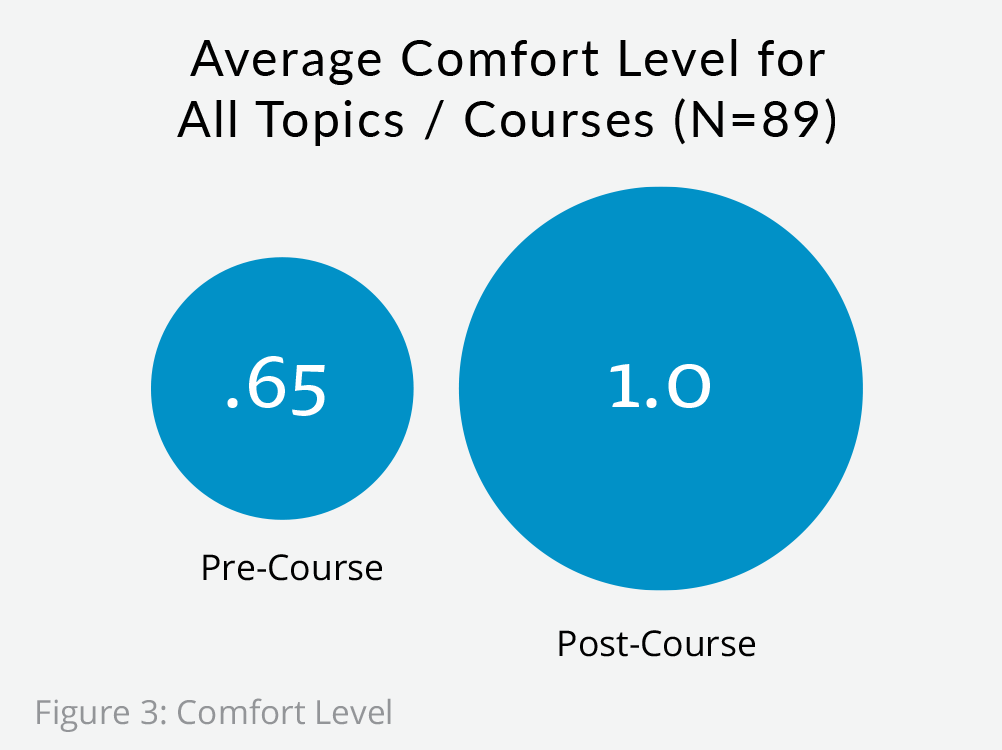

Overall, the results showed that hybridization allows students to engage in self-organized or self-regulated learning, learning at their own pace and allowing for greater attention to be devoted to lab assignments. As shown in Figure X, students reported far more comfort as a whole in both courses.

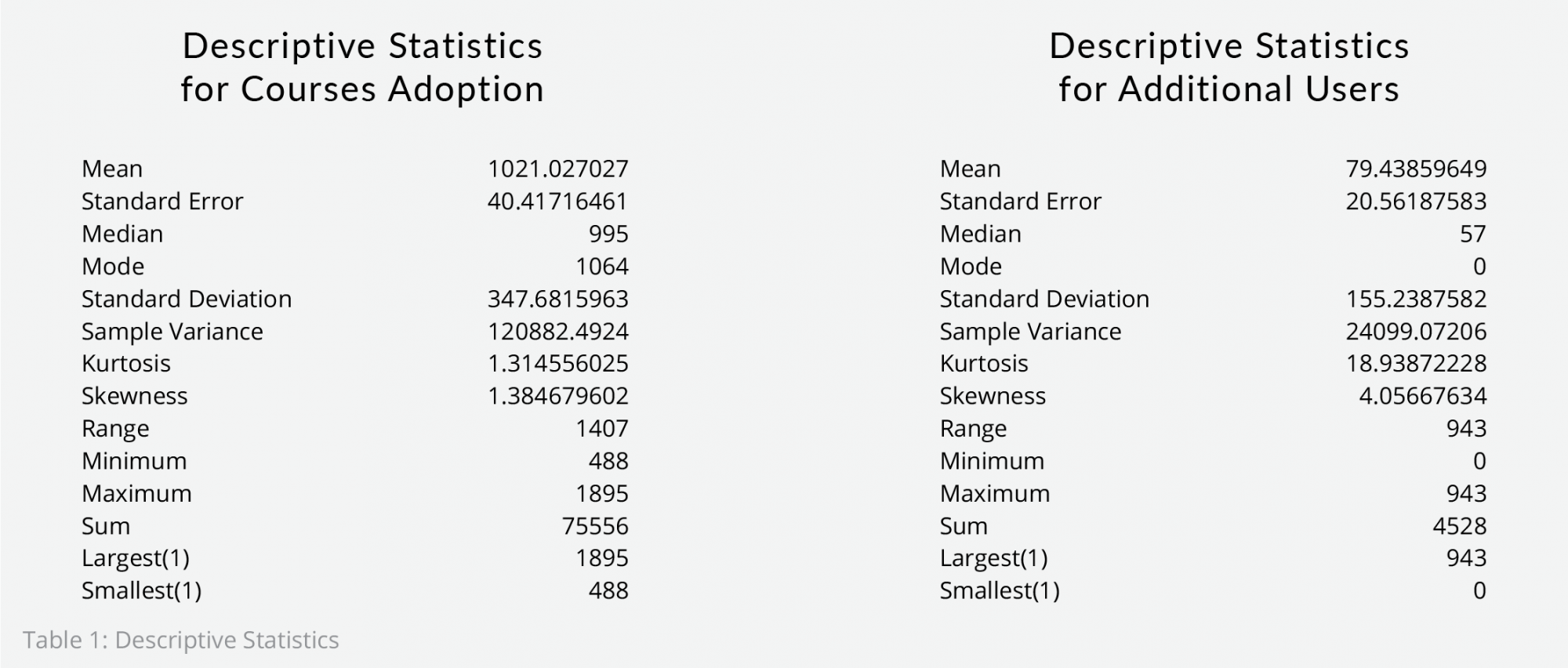

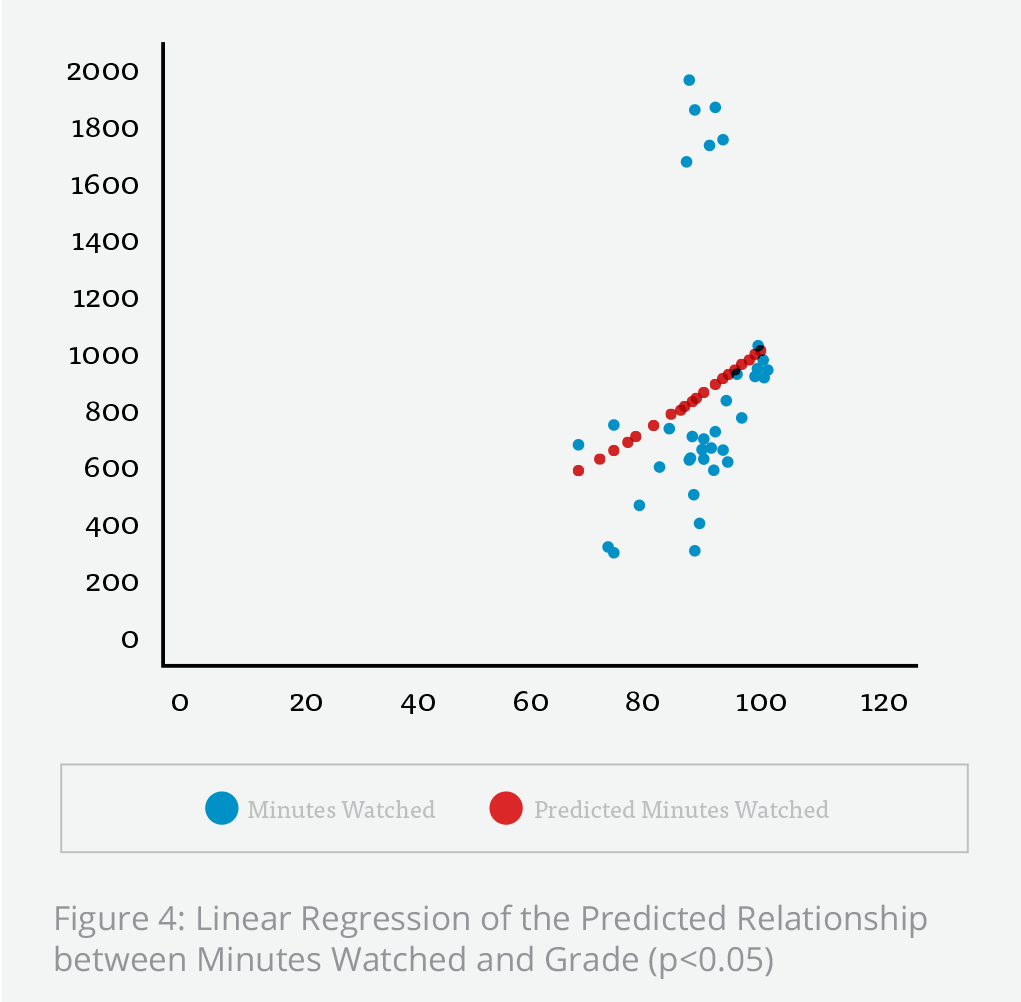

Class project and test grades correlated with the total minutes of online education watched in Planetizen Courses. These data were provided to instructors by Planetizen Courses as part of the Planetizen Courses "Educator Tools." Table 1 below provides a descriptive summary of the total number of minutes course users and other users spent accessing Planetizen's courses. Students spent a total of 75,556 minutes (1,259 hours) of time on the site during the two pilot courses, for an average of 1,021 minutes (~17 hours) per user.
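The unit conversions behind these figures can be checked with a few lines of arithmetic. The sketch below uses only the two minute totals reported above; the implied user count is derived by dividing one reported figure by the other and is not a number stated in the paper.

```python
# Figures reported in the paper.
total_minutes = 75_556
avg_minutes_per_user = 1_021

# Convert minutes to hours.
total_hours = total_minutes / 60                 # about 1,259 hours
avg_hours_per_user = avg_minutes_per_user / 60   # about 17 hours

# Derived from the two reported figures; NOT stated in the paper.
implied_users = total_minutes / avg_minutes_per_user

if __name__ == "__main__":
    print(f"Total hours: {total_hours:.0f}")
    print(f"Average hours per user: {avg_hours_per_user:.1f}")
    print(f"Implied number of users: {implied_users:.0f}")
```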

Table 1 indicates that students engaged with the online tool. When combined with student performance, Table 1 also indicates that, on average, the more time students spent using Planetizen Courses, the better they performed overall. A linear regression of overall course grade against minutes watched (illustrated in Figure 5) reveals that every additional 13 minutes spent watching courses corresponded to a one-point grade increase (significant at the 95 percent confidence level). The line plot further illustrates the connection between time spent viewing Planetizen Courses and improved grades. Students who invested time in the online courses showed marked improvement in their technical proficiency. Those students gain the potential to spend more time assisting and learning from their peers, a topic of increasing relevance in higher education (Boud, Cohen, & Sampson, 2014).
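To make the reported slope concrete, the following is a minimal sketch of an ordinary least squares fit of course grade on minutes watched. The data points are hypothetical, constructed to lie exactly on a line with a slope of one grade point per 13 minutes; they are not the pilot's data, and the function and variable names are illustrative only.

```python
def ols_fit(xs, ys):
    """Fit y = intercept + slope * x by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope is the covariance of x and y divided by the variance of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data: minutes watched and grades on a 100-point scale,
# built so that each extra 13 minutes adds exactly one grade point.
minutes = [600, 800, 1000, 1200, 1400]
grades = [70 + (m - 600) / 13 for m in minutes]

slope, intercept = ols_fit(minutes, grades)
minutes_per_point = 1 / slope  # minutes of viewing per one-point increase

if __name__ == "__main__":
    print(f"Slope: {slope:.4f} grade points per minute")
    print(f"Minutes per grade point: {minutes_per_point:.1f}")
```

A slope of roughly 0.077 grade points per minute is the same statement as "13 minutes per point." A fit of this kind describes an association and does not by itself show that viewing time caused the higher grades.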

Discussion and Lessons

In sum, this evaluation indicates that this kind of hybridization empowers students to learn and explore through self-directed problem solving. Hybridization also ensures that students have the necessary skills to complete class-related tasks on their own in the future. The virtual classroom helped guide a larger cohort, particularly in labs, eliminating the spatial dimension of the classroom and allowing for immediate (virtual) proximity between users who may be experiencing the same difficulties in completing lab assignments. This indicated both self-regulated learning, as well as social learning, which possibly led to better teamwork and creativity in the class—as shown in some of the representations of the final projects (Figure 6). Finally, the virtual course allowed valuable space to be allocated to other campus users.

On balance, students appreciated and even craved the format of the course, and felt it would be helpful for scheduling other classes and activities, suggesting the online courses may be helpful for student retention. At the start of the course, most of the students in the two classes expressed an appreciation for the flexible work environment and ability to "work at their own pace."

Students also appreciated the fact that they had the opportunity to accrue real-world experience with the courses, because many Planetizen Courses counted for certification maintenance credit for the American Institute of Certified Planners (AICP). In response to questions about what they were most excited about, students said things like:

- "I am most excited about the labs and the ability to work on them at my own pace."

- "Learning things on my own, so that in the future I can work through problems."

- "Lab activities and being able to work through them for more than 3 hours."

- "I think that being able to do the work on my own time and being able to re-watch lectures and rewind if necessary will be very beneficial to my learning."

- "The online portion of the course will help the most, I do a lot of checking back and re-watching so that I may understand the material."

A subset of students did not entirely agree with the positive initial statements. This subset of students appeared to have underestimated the amount of work required as part of the online course, overestimated their self-motivation, or both. That disconnect is likely related to what was referred to previously as the Netflix effect: students saw the courses as entertainment and needed reminders that they might need to watch online modules more than once and might also have to take notes. They needed reminders that online courses are not always easy and can be quite challenging.

Conclusion

Overall, the course was extremely successful in helping increase student learning while easing the campus burden on computational lab spaces. Students were able to complete labs online and not only gained computational skills but rounded out those skills with additional learning modules. All of the students scored 80 percent or better on each learning module quiz, which represents a noted performance increase. This was at the same time that the class size grew from approximately 25 to 50 students, saving campus space.

There were also unanticipated issues, including issues related to the implementation, the student response to the format, and the standard instructor assessment at the end of the course. While these results are limited in that they cannot indicate longer-term retention or dividend effects (it may be possible that students used the online learning modules for others or that other instructors integrated them into their curriculum), they do indicate an increased understanding of the material. More work would be needed to assess and accurately compare academic performance and longitudinal retention in an online vs. traditional classroom environment, but this pilot indicates that these virtual strategies hold promise for maintaining and expanding curriculum in a future of constrained fiscal and space resources.

William (Billy) Riggs, PhD is an Assistant Professor of City & Regional Planning and a leader in the area of transportation planning and technology, having worked as a practicing planner and published widely in the area. He has over 50 publications and has had his work featured nationally by Dr. Richard Florida in The Atlantic. He is also the principal author of Planetizen's Planning Web Technology Benchmarking Project. He can be found on Twitter @williamwriggs.

References

Aspelund, K., & Bernhard, M. (2015). As Faculty Reaches Largest Size, Departments Face Space Constraints. Retrieved March 26, 2016, from http://www.thecrimson.com/article/2015/2/20/faculty-face-space-constrai…

Boud, D., Cohen, R., & Sampson, J. (2014). Peer learning in higher education: Learning from and with each other. Routledge. Retrieved from https://books.google.com/books?hl=en&lr=&id=dHN9AwAAQBAJ&oi=fnd&pg=PR5&…

Chow, J. Y., Davids, K., Hristovski, R., Araújo, D., & Passos, P. (2011). Nonlinear pedagogy: Learning design for self-organizing neurobiological systems. New Ideas in Psychology, 29(2), 189–200. http://doi.org/10.1016/j.newideapsych.2010.10.001

Cocciolo, A. (2010). Alleviating physical space constraints using virtual space? A study from an urban academic library. Library Hi Tech, 28(4), 523–535.

Dinsmore, D. L., & Parkinson, M. M. (2013). What are confidence judgments made of? Students’ explanations for their confidence ratings and what that means for calibration. Learning and Instruction, 24, 4–14. http://doi.org/10.1016/j.learninstruc.2012.06.001

Dominici, G., & Palumbo, F. (2013). How to build an e-learning product: Factors for student/customer satisfaction. Business Horizons, 56(1), 87–96.

Gomez, S., Andersson, H., Park, J., Maw, S., Crook, A., & Orsmond, P. (2013). A digital ecosystems model of assessment feedback on student learning. Higher Education Studies, 3(2), 41.

Hawkins, A., Graham, C. R., Sudweeks, R. R., & Barbour, M. K. (2013). Academic performance, course completion rates, and student perception of the quality and frequency of interaction in a virtual high school. Distance Education, 34(1), 64–83. http://doi.org/10.1080/01587919.2013.770430

Hsia, L.-H., Huang, I., & Hwang, G.-J. (2015). A web-based peer-assessment approach to improving junior high school students’ performance, self-efficacy and motivation in performing arts courses. British Journal of Educational Technology. Retrieved from http://onlinelibrary.wiley.com/doi/10.1111/bjet.12248/pdf

Komarraju, M., & Nadler, D. (2013). Self-efficacy and academic achievement: Why do implicit beliefs, goals, and effort regulation matter? Learning and Individual Differences, 25, 67–72. http://doi.org/10.1016/j.lindif.2013.01.005

Kop, R., Fournier, H., & others. (2011). New dimensions to self-directed learning in an open networked learning environment. International Journal of Self-Directed Learning, 7(2), 2–20.

Meyer, J., & Land, R. (2013). Overcoming barriers to student understanding: Threshold concepts and troublesome knowledge. Routledge. Retrieved from https://books.google.com/books?hl=en&lr=&id=RCUVmm05qmcC&oi=fnd&pg=PP1&…

Miller, A., Imrie, B., & Cox, K. (2014). Student Assessment in Higher Education: A Handbook for Assessing Performance. Routledge.

O’Flaherty, J., & Phillips, C. (2015). The use of flipped classrooms in higher education: A scoping review. The Internet and Higher Education, 25, 85–95.

Rizzo, M., & Ehrenberg, R. G. (2004). Resident and nonresident tuition and enrollment at flagship state universities. In College choices: The economics of where to go, when to go, and how to pay for it (pp. 303–354). University of Chicago Press. Retrieved from http://www.nber.org/chapters/c10103.pdf

Wallner, T., & Menrad, M. (2012). High Performance Work Systems as an enabling structure for self-organized learning processes. In 2012 15th International Conference on Interactive Collaborative Learning (ICL) (pp. 1–6). http://doi.org/10.1109/ICL.2012.6402047

Yoo, M. S., Son, Y. J., Kim, Y. S., & Park, J. H. (2009). Video-based self-assessment: Implementation and evaluation in an undergraduate nursing course. Nurse Education Today, 29(6), 585–589. http://doi.org/10.1016/j.nedt.2008.12.008
