Given that today is the release date of the new iPhone, I want to talk about something else at Apple that really caught my attention -- their automated customer care. Last week I had to call Apple to find out how to get the sales tax removed from a purchase given our 501(c)(3) status. It was a complicated set of questions I needed to ask -- and yet the conversation was as smooth as talking to a live person. It struck me that I was getting a sneak preview of something that is going to radically transform how we use technology on a daily basis -- FINALLY.

Like a favorite pair of worn jeans, computing power and the internet will eventually become an almost effortless part of our lives. Having grown up with Star Trek, the Jetsons, and 2001: A Space Odyssey, my generation has been fantasizing about flawless voice recognition for decades. Most gadgets and applications attempting voice recognition, however, have been downright comical in their performance. Google voice search has impressed me numerous times, but my experience with Apple felt a league above. Their system recognized the context of my questions even as I stumbled over what to ask, and it responded with sentences and a voice that nearly fooled me into thinking I was talking to a live person.

Wired magazine had a short piece last year on how difficult it has been to master voice recognition and response, given all the nuances that come with tone, accents, and competing background noise. Our brains do an amazing job of subconsciously interpreting hard-to-hear words through an understanding of context and sentence structure, and of filtering out noises that are not of interest. We have all had those calls with automated systems where the interpretation is so bad you're ready to throw the phone out the window. Here is one of my favorites:

Context: A participant in our Wichita Walkshop left a message on our tech support hotline, which Google Voice then transcribed and sent to me in the following email:

"Yeah, this is Ben Foster and I was just want to get a hold of somebody from that which tell walk shop from this afternoon session that hi up they uploaded some my photos and they gave me instructions how to get 2 months, liquor, but can't seem to find them..."

Just for the record, my staff is not distributing 2 months of liquor, hi up, to our walkshop participants. If you know "which tell" was "Wichita" and "get 2 months, liquor" was "get them onto Flickr", you can piece together what was really said.

While the next iPhone and the first generation of the iPad show us how hardware and software are becoming more and more intuitive in responding to body movement and touch, adding accurate, effortless voice recognition will, in my opinion, give us the holy trinity of integrated technology. Jason's blog on the iPad highlights some of the many ways location-aware, motion- and touch-sensitive devices can be used in planning and civic participation. Being able to talk to these devices and have things interpreted accurately will open up a whole new world of potential applications. Looking forward to it.

* This blog was posted on PlaceMatters as well.

Planetizen Federal Action Tracker

A weekly monitor of how Trump’s orders and actions are impacting planners and planning in America.

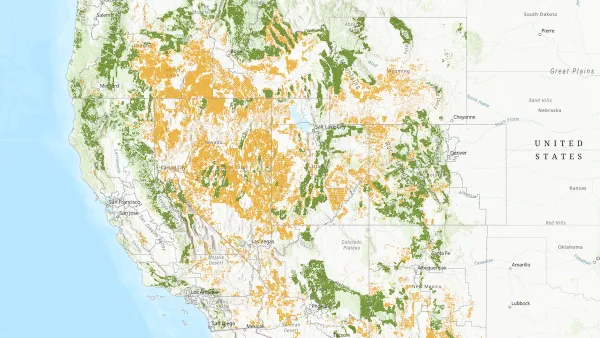

Map: Where Senate Republicans Want to Sell Your Public Lands

For public land advocates, the Senate Republicans’ proposal to sell millions of acres of public land in the West is “the biggest fight of their careers.”

Restaurant Patios Were a Pandemic Win — Why Were They so Hard to Keep?

Social distancing requirements and changes in travel patterns prompted cities to pilot new uses for street and sidewalk space. Then it got complicated.

Platform Pilsner: Vancouver Transit Agency Releases... a Beer?

TransLink will receive a portion of every sale of the four-pack.

Toronto Weighs Cheaper Transit, Parking Hikes for Major Events

Special event rates would take effect during large festivals, sports games and concerts to ‘discourage driving, manage congestion and free up space for transit.”

Berlin to Consider Car-Free Zone Larger Than Manhattan

The area bound by the 22-mile Ringbahn would still allow 12 uses of a private automobile per year per person, and several other exemptions.

Urban Design for Planners 1: Software Tools

This six-course series explores essential urban design concepts using open source software and equips planners with the tools they need to participate fully in the urban design process.

Planning for Universal Design

Learn the tools for implementing Universal Design in planning regulations.

Heyer Gruel & Associates PA

JM Goldson LLC

Custer County Colorado

City of Camden Redevelopment Agency

City of Astoria

Transportation Research & Education Center (TREC) at Portland State University

Camden Redevelopment Agency

City of Claremont

Municipality of Princeton (NJ)