When I was living in Boston the first time, in 1993, I had a conversation with my cousin, a longtime resident, about the then just-starting Big Dig project, putting the Central Artery highways underground (and increasing their capacity). Boston has terrible traffic (and terrible drivers -- I have never been closer to a stress-induced stroke than trying to drive around the Hub in rush hour) and I told my cousin, Jeff, that the Big Dig was a good thing, since it would certainly reduce congestion in the city.

"For a while," Jeff said. "But pretty soon everyone will realize that there's less traffic, and they'll start driving into the city instead of taking the T or the commuter rail. Then it'll be even worse."

In other words, it's long been received wisdom in traffic planning circles that more roads, or wider roads, are only a temporary stopgap for stop-and-go traffic.

Now a couple of researchers have modeled why this might be the case. Their program sets up a typical hub-and-spoke model, like a downtown with suburbs, and then adds time to any journey that passes through the downtown. Result? Adding a few roads decreases time on the road, but there's a threshold effect: above a certain number of roads, trip time increases.
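For the curious, here's a toy version of that kind of model. To be clear, this is my own rough sketch and not the researchers' program: I'm assuming the suburbs sit on a ring, the roads into downtown are evenly spaced, and the delay for passing through downtown grows in step with the number of roads feeding it. The names (`average_trip_time`, `delay_per_spoke`) are made up for illustration.

```python
from itertools import combinations


def ring_distance(a, b, n):
    """Shortest hop count between suburbs a and b on a ring of n suburbs."""
    d = abs(a - b)
    return min(d, n - d)


def average_trip_time(n, k, delay_per_spoke=1.0):
    """Average trip time between all pairs of n ring suburbs, with k roads
    (spokes) into a central downtown hub.

    Assumptions (mine, not from the research): spokes are evenly spaced,
    each spoke takes 1 time unit, and crossing downtown adds a delay of
    delay_per_spoke * k, so more roads in means a slower downtown.
    """
    spokes = [(m * n) // k for m in range(k)]
    # Distance from each suburb to its nearest spoke (None if no spokes).
    to_spoke = [
        min((ring_distance(i, s, n) for s in spokes), default=None)
        for i in range(n)
    ]
    total = 0.0
    pairs = 0
    for i, j in combinations(range(n), 2):
        best = ring_distance(i, j, n)  # stay on the ring road
        if spokes:
            # Drive to a spoke, in to downtown, sit in traffic, and back out.
            via_hub = to_spoke[i] + 1 + delay_per_spoke * k + 1 + to_spoke[j]
            best = min(best, via_hub)
        total += best
        pairs += 1
    return total / pairs


if __name__ == "__main__":
    n = 60
    for k in (0, 2, 4, 8, 16, 32, 60):
        print(f"{k:2d} roads into downtown: average trip {average_trip_time(n, k):.2f}")
```

In this sketch a couple of roads into downtown shorten the average trip, but as the count climbs the downtown delay swamps the shortcut, drivers go back to the ring road, and the average creeps back up to where it started. Drivers here always pick the faster route, so it only shows the benefit evaporating, not Jeff's "even worse."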

I stole this from New Scientist, by the way. And full disclosure: I didn't so much read the research article as look at it. As Barbie once said, "math is hard!"

This conclusion isn't interesting only for itself. It also points out a schism between the way European and US researchers think about traffic. I'm stereotyping here, but in general US planners and thinkers take a wholly pragmatic approach to congestion planning: meters, wider roads, toll roads, and eventually arguments over whether suburbs should just have their own damn downtowns to keep those people off the roads (and this, parenthetically [as you can probably tell from the parentheses], is why Los Angeles is so troubling to planners: nobody works downtown, so where the hell is everyone going that there's so much traffic on the 405?). The best example of this pragmatic approach is the annual mobility report the Texas Transportation Institute puts out. Great data, very wonky.

Europeans, on the other hand, go all complex and chaotic on the problem. They model hubs and spokes. They turn to fluid dynamics and wave propagation to explain why clogs on the road can propagate backward, causing unexplained slowdowns miles upstream from a problem that's long been towed away or bulldozed into a ravine.

Am I generalizing? Sure. I had a tour of the Santa Fe Institute some years back -- it's in the US -- and saw some work going on there trying to use complexity theory to understand traffic. But first of all, it's just a blog, and so what, you're expecting supported theories? But also, neither approach seems to work all that well in clearing up the freeway between the San Fernando Valley and the Los Angeles basin.

What are we all missing?

Planetizen Federal Action Tracker

A weekly monitor of how Trump’s orders and actions are impacting planners and planning in America.

Restaurant Patios Were a Pandemic Win — Why Were They so Hard to Keep?

Social distancing requirements and changes in travel patterns prompted cities to pilot new uses for street and sidewalk space. Then it got complicated.

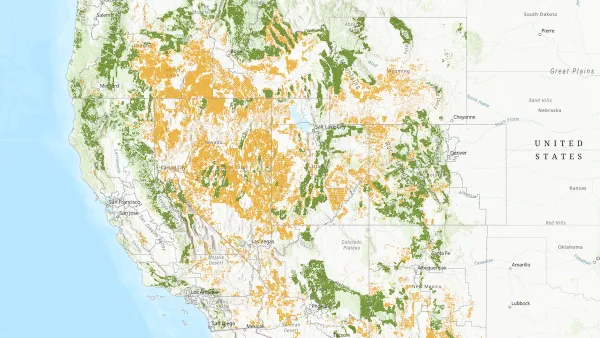

Map: Where Senate Republicans Want to Sell Your Public Lands

For public land advocates, the Senate Republicans’ proposal to sell millions of acres of public land in the West is “the biggest fight of their careers.”

Maui's Vacation Rental Debate Turns Ugly

Verbal attacks, misinformation campaigns and fistfights plague a high-stakes debate to convert thousands of vacation rentals into long-term housing.

San Francisco Suspends Traffic Calming Amidst Record Deaths

Citing “a challenging fiscal landscape,” the city will cease the program on the heels of 42 traffic deaths, including 24 pedestrians.

California Homeless Arrests, Citations Spike After Ruling

An investigation reveals that anti-homeless actions increased up to 500% after Grants Pass v. Johnson — even in cities claiming no policy change.

Urban Design for Planners 1: Software Tools

This six-course series explores essential urban design concepts using open source software and equips planners with the tools they need to participate fully in the urban design process.

Planning for Universal Design

Learn the tools for implementing Universal Design in planning regulations.

Heyer Gruel & Associates PA

JM Goldson LLC

Custer County Colorado

City of Camden Redevelopment Agency

City of Astoria

Transportation Research & Education Center (TREC) at Portland State University

Camden Redevelopment Agency

City of Claremont

Municipality of Princeton (NJ)